A specialist in the big data market should be able to design and code statistical and quantitative analyses. A solid understanding of mathematics and logical reasoning is also required.

A big data professional should be knowledgeable about various algorithms and data sorting techniques.

If you are preparing for a role in Big Data, then it'd be wise to list out the different technical and behavioral skills important for a role in this domain.

Technical Questions

1. How well-versed are you in the concept of "Big Data"?

Big Data refers to massive, complex datasets. Big data operations require specialized tools and techniques because a traditional relational database cannot handle such a large amount of data.

Big data enables businesses to gain a deeper understanding of their industry and to extract valuable information from the raw, unstructured data they routinely gather, which in turn supports more informed business decisions.

2. Explain the relationship between big data and Hadoop.

The terms "big data" and "Hadoop" are closely linked but not interchangeable: big data describes the data and the problems it poses, while Hadoop is a framework that focuses on big data operations. Hadoop gained popularity along with the growth of big data. Professionals can use the framework to analyze big data and assist organizations in decision-making.

3. How many steps are to be followed to deploy a Big Data solution?

There are three steps to be followed:

- Data Ingestion

- Data Storage

- Data Processing.

4. Explain the three steps involved in deploying a Big Data solution.

Data Ingestion: The first step in deploying a big data solution is data ingestion, that is, extracting data from diverse sources. The source could be a CRM like Salesforce, an ERP like SAP, an RDBMS like MySQL, or log files, documents, social media feeds, and so on. The data can be ingested through either batch jobs or real-time streams. The extracted data is then stored in HDFS.

Data Storage: The next step after ingestion is to store the extracted data, either in HDFS or in a NoSQL database such as HBase. HDFS storage is better for sequential access, while HBase is better for random read/write access.

Data Processing: The last step is to process the data using one of the processing frameworks, such as Spark, MapReduce, or Pig.
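The three steps can be pictured as a small pipeline. The sketch below is a toy, in-memory illustration in plain Python, not Hadoop code; the function names `ingest`, `store`, and `process` and the sample records are hypothetical.

```python
from collections import Counter

def ingest(sources):
    """Ingestion: pull raw records from several (hypothetical) sources."""
    for name, records in sources.items():
        for record in records:
            yield {"source": name, "text": record}

def store(records):
    """Storage: keep the records; a real system would write to HDFS or HBase."""
    return list(records)

def process(stored):
    """Processing: count records per source, standing in for a Spark or MapReduce job."""
    return Counter(row["source"] for row in stored)

# Made-up sample data from two sources.
sources = {
    "crm": ["new lead", "closed deal"],
    "web_logs": ["GET /home", "GET /cart", "POST /order"],
}
summary = process(store(ingest(sources)))
print(dict(summary))
```

Each stage only depends on the output of the previous one, which is why the storage and processing technologies can be chosen independently.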

5. What is the purpose of Hadoop in big data analytics?

Enterprises are dealing with a huge volume of structured, unstructured, and semi-structured data because data analysis has become one of the primary determinants of a company's success.

Unstructured data can be challenging to analyze, which is where Hadoop's capabilities come into play.

Hadoop is also open source and runs on standard commodity hardware. Consequently, it is a cost-effective option for companies.

6. Describe FSCK.

Fsck stands for file system check. It is a command used in HDFS to check for inconsistencies and to determine whether files have any problems. For instance, the command reports any file blocks that are missing.
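To make the idea of a block-level health check concrete, here is a minimal sketch in plain Python. It is a toy model, not how HDFS implements fsck; the function `check_blocks`, the block IDs, and the node names are all hypothetical.

```python
def check_blocks(file_blocks, block_replicas, target_replication=3):
    """Report missing and under-replicated blocks per file (toy fsck-style check)."""
    report = {}
    for path, blocks in file_blocks.items():
        # A block with no known replica is missing.
        missing = [b for b in blocks if not block_replicas.get(b)]
        # A block with some replicas, but fewer than the target, is under-replicated.
        under = [b for b in blocks
                 if 0 < len(block_replicas.get(b, [])) < target_replication]
        report[path] = {"missing": missing, "under_replicated": under}
    return report

# Hypothetical metadata: which blocks make up each file, and where replicas live.
file_blocks = {"/logs/a.txt": ["blk_1", "blk_2"], "/logs/b.txt": ["blk_3"]}
block_replicas = {
    "blk_1": ["node1", "node2", "node3"],
    "blk_2": ["node1"],
    "blk_3": [],
}
report = check_blocks(file_blocks, block_replicas)
print(report)
```

Like the check described above, the sketch only reports problems; it does not try to repair them.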

7. What command should I use to format the NameNode?

"$ hdfs namenode -format"

8. Have you had any experience with big data? If so, kindly let us know.

How to Proceed: The question is subjective, so there is no right or wrong response; the answer depends on your prior experience. Asking about your prior experience helps the interviewer determine whether you are a good fit for the project's requirements.

So, how will you respond to the query? If you have prior experience, begin by discussing your responsibilities in a previous role before gradually introducing more information. Inform them of the ways in which you helped to make the project a success.

9. Do you prefer good data or good models? Why?

How to Proceed:

Although it is a difficult question, it is frequently asked in big data interviews. You are asked to choose between good data and good models, and you should answer from your own experience. Many companies follow a strict process for evaluating data, which means they have already selected their data models. Good data can change the game in this situation. The reverse also holds, since a model is chosen based on good data.

10. Will you speed up the code or algorithms you use?

How to Proceed:

This question should always be answered in the affirmative. Performance in the real world is important and is independent of the data or model you are utilizing in your project.

11. How do you go about preparing data?

How to Proceed:

One of the most important phases in big data projects is data preparation, so expect at least one question on it during a big data interview. The interviewer asks this question to learn what processes or safety measures you use when preparing your data.

As you are already aware, data preparation is vital for obtaining the appropriate data, which can then be used for modeling. This is the message you should convey to the interviewer. Be sure to mention the kind of model you will be using and the factors that went into your decision. You should also review key data preparation terms such as transforming variables, outlier values, and unstructured data.

12. How could unstructured data be converted into structured data?

How to Proceed:

In big data, unstructured data is particularly prevalent. To achieve accurate data analysis, the unstructured data should be converted into structured data. You might begin your answer by briefly contrasting the two.

You can then talk about the techniques you use to convert one form into the other. You could also describe a situation in which you actually did it. If you recently graduated, you can draw on your academic projects.
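One technique you could mention is pattern-based parsing. The sketch below, in plain Python, turns free-form log lines into structured rows with a regular expression. The log layout, the field names, and the sample lines are assumptions made up for illustration.

```python
import re

# Assumed line layout: <ip> [<timestamp>] "<METHOD> <path>" <status>
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>\w+) (?P<path>\S+)" (?P<status>\d{3})'
)

def parse_line(line):
    """Return a dict of named fields, or None if the line does not fit the pattern."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    row = match.groupdict()
    row["status"] = int(row["status"])  # give the numeric field a numeric type
    return row

raw_lines = [
    '10.0.0.1 [2023-01-05 10:00:00] "GET /home" 200',
    'not a log line',
    '10.0.0.2 [2023-01-05 10:00:03] "POST /order" 500',
]
# Keep only the lines that could be parsed into structured rows.
rows = [row for row in map(parse_line, raw_lines) if row is not None]
print(rows)
```

Lines that do not match are dropped here for brevity; in an interview it is worth saying that you would normally log or quarantine them instead.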

13. What kind of hardware setup is best for Hadoop jobs?

Dual-processor or dual-core machines with 4 or 8 GB of ECC RAM are appropriate for running Hadoop operations. However, hardware requirements vary, so the setup needs to be customized to the workflow and process flow of the specific project.

14. What takes place when two users attempt to access the same file in the HDFS?

The HDFS NameNode supports exclusive writes only. As a result, when two users attempt to write to the same file, only the first user is granted access and the second user is rejected.
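The exclusive-write rule can be sketched as a tiny lock table. This is a toy model in plain Python, not the NameNode's actual code; the class `LeaseManager`, its methods, and the file path are hypothetical.

```python
class LeaseManager:
    """Toy model of exclusive writes: at most one writer per file at a time."""

    def __init__(self):
        self._holders = {}  # path -> client currently allowed to write

    def open_for_write(self, path, client):
        holder = self._holders.get(path)
        if holder is not None and holder != client:
            # Someone else is already writing: reject the second writer.
            raise PermissionError(f"{path} is already being written by {holder}")
        self._holders[path] = client

    def close(self, path, client):
        # Only the current writer can release the file.
        if self._holders.get(path) == client:
            del self._holders[path]

    def holder(self, path):
        return self._holders.get(path)

leases = LeaseManager()
leases.open_for_write("/data/report.csv", "user_a")
try:
    leases.open_for_write("/data/report.csv", "user_b")
    second_writer_allowed = True
except PermissionError:
    second_writer_allowed = False
print(second_writer_allowed)
```

Once the first user closes the file, the second user's request would succeed.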

15. What distinguishes "HDFS Block" from "Input Split"?

HDFS Block: HDFS physically divides the input data into fixed-size units known as HDFS Blocks.

Input Split: An Input Split is a logical division of the data that defines the portion each mapper processes in the map operation.
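The difference is easiest to see on a small example. The sketch below, in plain Python, cuts a byte string into fixed-size blocks and, separately, into splits that end on a record (line) boundary. It is a simplification, not Hadoop's actual split logic; the function names and the tiny block size are assumptions chosen for illustration.

```python
def block_ranges(file_size, block_size):
    """Physical division: fixed-size byte ranges, regardless of record boundaries."""
    return [(start, min(start + block_size, file_size))
            for start in range(0, file_size, block_size)]

def line_splits(data, block_size):
    """Logical division: roughly block-sized pieces that always end on a full line."""
    splits, start = [], 0
    while start < len(data):
        end = min(start + block_size, len(data))
        # Extend the split until the current record (line) is complete.
        while end < len(data) and data[end - 1:end] != b"\n":
            end += 1
        splits.append(data[start:end])
        start = end
    return splits

data = b"alpha,1\nbravo,2\ncharlie,3\n"
blocks = block_ranges(len(data), block_size=10)
splits = line_splits(data, block_size=10)
print(blocks)
print(splits)
```

The blocks cut the second record in half, while every split contains only whole records, which is what a mapper needs.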

16. Where does Big Data come from and what does it mean? How does it function?

Big Data refers to data collections so large and complex that they are impossible to manage with traditional software solutions. Big Data consists of structured and unstructured data sets, including website data, multimedia, audio, video, and photos.

Businesses can gather the data they require in a variety of ways, including:

- Cookies on websites

- Email monitoring

- Smartphones

- Smartwatches

- Forms of online transactions

- Interactions with websites

- Transactional records

- Posts on social media

- Third-party trackers, that is, companies that collect and sell customer data and other valuable data

Three groups of tasks are involved in working with big data:

Integration: Integration is the process of combining data, frequently from multiple sources, and transforming it into a format that can be analyzed to offer insights.

Management: Big data needs to be kept in a repository from which it can be easily accessed and retrieved. Big Data is primarily unstructured, making it unsuitable for traditional relational databases, which require data in a formatted table and row format.

Analysis: The return on investment in big data comes from a variety of valuable market insights, such as information on consumer preferences and purchasing trends. These insights are uncovered by using tools powered by AI and machine learning to analyze huge data sets.

17. What are the five pillars of big data?

Volume: Volume refers to the size of the data kept in data warehouses. This data, which can range from terabytes to petabytes or more, must be reviewed and processed.

Velocity: Velocity describes how quickly real-time data is generated. For a straightforward illustration, imagine the number of Facebook, Instagram, or Twitter posts created every second, over an hour or longer.

Variety: Big Data consists of data that has been acquired from various sources and is structured, unstructured, and semi-structured. This diverse range of data requires distinct and suitable algorithms, as well as varied and specific analysis and processing procedures.

Veracity: In simple terms, veracity is the quality of the data being examined. It pertains to how trustworthy the data is in general.

Value: Unprocessed data has no purpose or meaning on its own, but it can be transformed into something worthwhile: knowledge that is useful.

18. Why is big data being used by corporations to gain a competitive edge?

Regardless of a company's sector or size, data is now a crucial instrument that organizations must use. Big data is widely used by businesses to get an advantage over rivals in their industry.

One step in the big data process is auditing the datasets a business acquires. Big data experts also need to understand what the business expects from the application and how they intend to use the data.

Decision-making with confidence: Analytics strives to foster decision-making, and big data continues to support this. Because so much data is available, big data can help businesses make decisions more quickly while maintaining the same level of certainty. In a fast-paced environment, being able to move quickly and respond to larger trends and operational changes is a big commercial advantage.

Asset optimization: Big data gives companies individual-level control over their assets. They can optimize assets based on the data source, increase production, extend the lifespan of equipment, and minimize downtime for specific assets. Making the most of its resources while lowering costs gives a company a competitive advantage.

Cost reduction: Big data can assist companies in cutting costs. Data gathered by businesses can assist them in identifying areas where cost savings can be made without having a negative impact on business operations, from analyzing energy usage to evaluating the effectiveness of staff operating patterns.

Improve Customer Engagement: Consumers reveal their decisions, habits, and tendencies when they respond to online surveys. Businesses can use this information to establish and adapt their dialogue with consumers, which can subsequently translate into higher sales. Understanding each customer's preferences through the data gathered on them allows you to target them with certain products while also providing the personalized experience that many modern consumers have grown to expect.

Identify fresh sources of income: Analytics can help businesses find new sources of income and diversify their operations. For instance, understanding consumer patterns and choices enables businesses to choose their course of action. The data that firms gather may also be sold, adding new revenue sources and the opportunity to form commercial partnerships.

20. Explain the importance of Hadoop technology in Big data analytics.

The mix of structured, semi-structured, and unstructured data that makes up big data makes it challenging to analyze and handle. A tool or technology was needed to help process the data quickly. Hadoop is used because of its processing and storage capabilities. Hadoop is also open-source software, which makes it a cost-effective choice for business solutions.

Its widespread use in recent years is mostly due to the framework's ability to distribute the processing of large data sets across clusters of computers using simple programming models.

21. Describe the attributes of Hadoop.

Hadoop helps with both data storage and big data processing. It is a dependable way of overcoming big data challenges. Some key characteristics of Hadoop include:

Distributed Processing: Hadoop facilitates distributed data processing, which results in faster processing. MapReduce is responsible for the parallel processing of the distributed data that is collected in Hadoop HDFS.

Open Source: Because Hadoop is an open-source framework, it is free to use. The source code may be modified to suit the needs of the user.

Fault Tolerance: Hadoop has a high level of fault tolerance. By default, it creates three replicas of each block on different nodes. This replica count can be changed as needed. Therefore, if one of the nodes fails, the data can be retrieved from another node. Node failure detection and data recovery are done automatically.

Scalability: New nodes can be added to the cluster quickly, even if they run on different hardware.

Reliability: Hadoop stores data in a cluster in a secure manner that is independent of the machine. So, any machine failures have no impact on the data saved in the Hadoop environment.
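The fault-tolerance behavior described above can be illustrated with a toy model. The sketch below, in plain Python, places three replicas of each block on different nodes and checks which blocks survive a node failure. The round-robin placement is an assumption for illustration, not Hadoop's real placement policy, and all names are hypothetical.

```python
import itertools

def place_replicas(blocks, nodes, replication=3):
    """Assign each block to `replication` distinct nodes, round-robin (toy policy)."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    node_cycle = itertools.cycle(nodes)
    # Consecutive picks from the cycle are distinct while replication <= len(nodes).
    return {block: [next(node_cycle) for _ in range(replication)] for block in blocks}

def readable_blocks(placement, failed_nodes):
    """A block stays readable while at least one of its replicas is on a live node."""
    failed = set(failed_nodes)
    return {block for block, holders in placement.items() if set(holders) - failed}

nodes = ["node1", "node2", "node3", "node4"]
placement = place_replicas(["blk_1", "blk_2", "blk_3"], nodes)
survivors = readable_blocks(placement, failed_nodes=["node1"])
print(placement)
print(survivors)
```

With three replicas per block, losing a single node leaves every block readable, which is the property the Fault Tolerance and Reliability points describe.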

22. What distinguishes HDFS from conventional NFS?

NFS (Network File System) is a protocol that enables users to access files over a network. Even though the files are stored on the disk of a networked device, NFS clients provide access to them as if they were local.

A distributed file system, such as HDFS (Hadoop Distributed File System), is one that is shared by numerous networked computers or nodes. Because it stores several copies of files on the file system—the default replication level is three—HDFS is fault-tolerant.

Replication/Fault Tolerance is the key distinction between the two. HDFS was designed to tolerate failures, whereas NFS has no built-in fault tolerance.

23. What exactly is data modeling and why is it necessary?

The IT industry has been using data modeling for many years. Data modeling is a method for producing a diagram of the data by thoroughly understanding the data in question. Visualizing the data in this way allows business and technology experts to understand the data and how it will be used.

The benefits of data modeling

Companies can gain a variety of advantages from data modeling as part of their data management, including:

- Before you ever establish a database, you have cleansed, arranged, and modeled your data to project what your next step should entail. Thanks to data modeling, databases are less prone to errors and better designed, which also improves data quality.

- Data modeling creates a visual representation of the flow of your planned data organization. Employee understanding of data trends and their role in the data management puzzle is aided by this. Additionally, it fosters data-related communication between organizational departments.

- Data modeling makes it possible to create databases with greater depth, which leads to the development of future applications and data-based business insights.

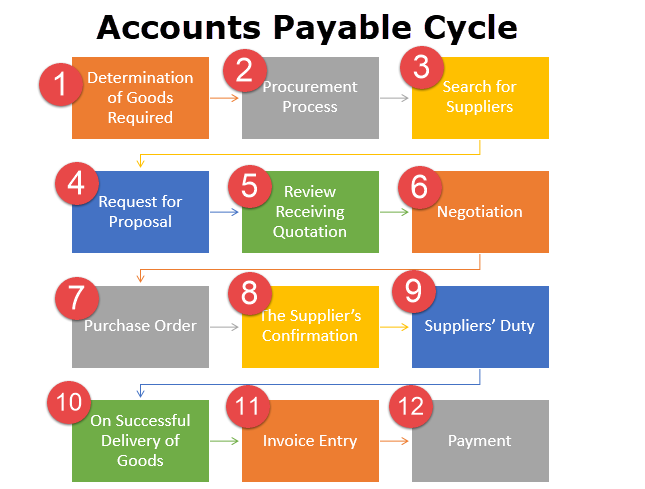

24. How is a big data model deployed? Mention the essential procedures

The three basic steps involved in deploying a model onto a big data platform are,

- Data Ingestion

- Data Storage

- Data Processing

Data ingestion: This process entails gathering data from various platforms, including social media, corporate applications, log files, etc.

Data Storage: After data extraction is finished, storing the vast volume of data in the database presents a challenge. Here, the Hadoop Distributed File System (HDFS) is crucial.

Data processing: After storing the data in HDFS or HBase, the next step is to use specific algorithms to analyze and visualize this massive amount of data. Again, using Hadoop, Apache Spark, Pig, etc. will make this work simpler.

After carrying out these crucial steps, one can successfully deploy a big data model.

25. Describe fsck.

File System Check, or fsck, is a command used in HDFS. It is used to look for inconsistencies and determine whether files have any problems. For instance, the command produces a report if any file blocks are missing.

26. Mention the usual Hadoop input formats

Hadoop's typical input formats are:

Text Input Format: Hadoop uses this as its default input format.

Key Value Input Format: Hadoop uses this format to read plain text files in which each line is split into a key and a value.

Sequence File Input Format: Hadoop uses this format to read sequence files, Hadoop's binary key-value file format.
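To show what the two text-based formats hand to a mapper, here is a rough sketch in plain Python. It assumes that the text input format yields the byte offset of each line as the key and the line as the value, and that the key-value format splits each line at the first tab. The function names are hypothetical and this is not Hadoop's API.

```python
def text_input_records(data):
    """Text-input style records: key = byte offset of the line, value = the line."""
    offset = 0
    for line in data.splitlines(keepends=True):
        yield offset, line.rstrip("\n")
        offset += len(line.encode("utf-8"))

def parse_key_value(line, separator="\t"):
    """Key-value style records: split at the first separator; no separator means an empty value."""
    key, _, value = line.partition(separator)
    return key, value

data = "k1\tv1\nk2\tv2\n"
by_offset = list(text_input_records(data))
by_key = [parse_key_value(line) for _, line in by_offset]
print(by_offset)
print(by_key)
```

The same file therefore produces different keys depending on the input format chosen for the job.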

27. What are the different big data processing techniques?

Big Data processing methods analyze data sets at a massive scale. Offline batch processing typically runs at full power and full scale, tackling arbitrary BI scenarios. In contrast, real-time stream processing works on the most recent slice of data, for tasks such as data profiling to pick out outliers, detecting fraudulent transactions, and safety monitoring. The most challenging task, however, is fast or real-time ad-hoc analytics on a large, comprehensive data set, which essentially means scanning huge amounts of data within seconds. This is only possible when the data is processed with high parallelism.

Different techniques of Big Data Processing are:

- Batch Processing of Big Data

- Big Data Stream Processing

- Real-Time Big Data Processing

- MapReduce
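The contrast between batch and stream processing can be sketched with a single statistic. In the plain-Python illustration below, the batch version needs the whole data set before it answers, while the streaming version emits an updated answer after every new value. The function names and the sample readings are made up for illustration.

```python
def batch_average(values):
    """Batch: look at the complete data set once, then produce one result."""
    values = list(values)
    return sum(values) / len(values)

def streaming_average(stream):
    """Stream: keep a small running state and emit a result per incoming value."""
    count, total = 0, 0.0
    for value in stream:
        count += 1
        total += value
        yield total / count

readings = [10, 20, 30, 40]
running = list(streaming_average(readings))
print(batch_average(readings))
print(running)
```

The final streaming value matches the batch result; the difference is when intermediate answers become available and how much data must be held at once.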

28. How does Hadoop's Map Reduce work?

Hadoop MapReduce is a software framework used to process huge data sets. It is the primary data-processing component of the Hadoop system. It splits the incoming data into parts and runs a program on each part in parallel. The term "MapReduce" refers to two distinct tasks. The first is the map operation, which turns a set of data into another set of data in which individual elements are broken down into key-value tuples. The second is the reduce operation, which combines the tuples that share a key into a smaller set of values.
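The classic illustration of this model is a word count. The sketch below imitates the map, shuffle, and reduce steps in plain Python on a single machine; it is not Hadoop code, and the function names and sample documents are hypothetical.

```python
from collections import defaultdict

def map_phase(document):
    """Map: break the input into (key, value) tuples, here (word, 1)."""
    for word in document.lower().split():
        yield word, 1

def shuffle(pairs):
    """Shuffle: group all values that share a key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(key, values):
    """Reduce: combine the values of one key into a single result."""
    return key, sum(values)

documents = ["big data big insights", "data drives decisions"]
pairs = [pair for doc in documents for pair in map_phase(doc)]
counts = dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())
print(counts)
```

In a real cluster the map calls run in parallel on different parts of the data, and the framework performs the grouping step between the two phases.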

29. Mention Reducer's primary methods.

A Reducer's primary methods are:

setup(): This method is called once at the start to configure the reducer's parameters.

reduce(): This is the reducer's primary method. It specifies the task to be carried out for each unique group of values that share a key.

cleanup(): After the reduce() work is finished, cleanup() is called to clean up or delete any temporary files or data.
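The order in which these three methods run can be sketched as follows. This is a plain-Python imitation of the lifecycle, not Hadoop's Java Reducer class; `SumReducer`, `run_reducer`, and the sample groups are hypothetical.

```python
class SumReducer:
    """Toy reducer with the same three-stage lifecycle."""

    def setup(self):
        # Runs once before any keys are processed: initialise state.
        self.groups_seen = 0
        self.closed = False

    def reduce(self, key, values):
        # Runs once per key, with all the values that share that key.
        self.groups_seen += 1
        return key, sum(values)

    def cleanup(self):
        # Runs once after the last key: release temporary resources.
        self.closed = True

def run_reducer(reducer, grouped):
    """Drive the lifecycle: setup, then reduce per key in sorted order, then cleanup."""
    reducer.setup()
    results = [reducer.reduce(key, values) for key, values in sorted(grouped.items())]
    reducer.cleanup()
    return results

reducer = SumReducer()
results = run_reducer(reducer, {"data": [1, 1], "big": [1, 1, 1]})
print(results)
```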

30. How can you skip bad records in Hadoop?

Hadoop provides an option to skip a particular set of bad input records while processing map inputs. The SkipBadRecords class offers an optional mode of execution in which bad records are detected over multiple attempts and then skipped. Such records may cause failures because of bugs in the map function. The user would normally have to fix these bugs manually, which is not always possible because the bug may be in a third-party library. With this feature, only a small amount of data is lost, which may be acceptable when dealing with a large amount of data.
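The general idea of tolerating a few bad records can be sketched in plain Python. The code below is a generic illustration, not the SkipBadRecords API; the function `map_with_skipping`, its `max_skipped` limit, and the sample records are assumptions.

```python
def map_with_skipping(records, map_fn, max_skipped=2):
    """Apply map_fn to each record, setting aside records that make it fail."""
    outputs, skipped = [], []
    for record in records:
        try:
            outputs.append(map_fn(record))
        except ValueError:
            skipped.append(record)
            # Give up if too much data would be lost.
            if len(skipped) > max_skipped:
                raise RuntimeError("too many bad records")
    return outputs, skipped

records = ["1", "2", "oops", "4"]
outputs, skipped = map_with_skipping(records, int)
print(outputs, skipped)
```

Capping the number of skipped records reflects the trade-off described above: losing a small amount of data is acceptable, losing a lot is not.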

31. Describe Outliers.

Outliers are data points that lie far from the rest of the data and do not belong to any cluster or group. They can affect how a model behaves, causing it to forecast the wrong outcomes or making its results highly inaccurate. Outliers must nevertheless be handled cautiously, because they may also reveal useful information. In a Big Data model or a machine learning model, outliers can lead to:

- Poor outcomes

- Reduced precision

- Extended training time
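One common rule of thumb for spotting outliers is the interquartile-range (IQR) rule; the text above does not prescribe a method, so treat this as one option among several. The sketch below uses Python's standard `statistics` module; the function name, the multiplier `k=1.5`, and the sample values are assumptions.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Flag values more than k * IQR below the first or above the third quartile."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

values = [12, 13, 12, 14, 13, 15, 14, 13, 95]
outliers = iqr_outliers(values)
print(outliers)
```

Flagging is only the first step; whether to remove, cap, or keep a flagged value depends on whether it is an error or a genuine signal.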

32. How does data preparation work?

The practice of cleaning and changing raw data before processing and analysis is known as data preparation. Prior to processing, this critical stage frequently entails reformatting data, making enhancements, and combining data sets to enrich data.

For data specialists or business users, data preparation is a never-ending effort. However, it is crucial to put data into context in order to gain insights and then be able to remove the biased results discovered as a result of bad data quality.

For instance, standardizing data formats, improving source data, and/or removing outliers are all common steps in the data preparation process.
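Two of these steps, standardizing formats and cleaning source data, can be sketched in a few lines of plain Python. The field names, the accepted date formats, and the sample rows below are all made up for illustration.

```python
from datetime import datetime

# Date layouts we assume may appear in the raw data.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y.%m.%d")

def standardize_date(text):
    """Return the date in ISO format, or None if no known layout matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

def prepare(rows):
    """Normalise names and dates, drop unparseable rows and exact duplicates."""
    cleaned, seen = [], set()
    for row in rows:
        date = standardize_date(row["date"])
        if date is None:
            continue
        record = (row["customer"].strip().lower(), date)
        if record in seen:
            continue
        seen.add(record)
        cleaned.append({"customer": record[0], "date": date})
    return cleaned

raw = [
    {"customer": " Alice ", "date": "2023-01-05"},
    {"customer": "alice", "date": "05/01/2023"},
    {"customer": "Bob", "date": "2023.01.07"},
    {"customer": "Carol", "date": "unknown"},
]
prepared = prepare(raw)
print(prepared)
```

The two Alice rows only turn out to be duplicates after both the name and the date have been standardized, which is why formatting comes before deduplication.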

33. What is Distcp?

DistCp (distributed copy) is a tool for copying very large amounts of data to and from Hadoop file systems in parallel. It uses MapReduce for its distribution, error handling, recovery, and reporting. It expands a list of files and directories into inputs for map tasks, each of which copies a specific subset of the files in the source list.
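The idea of splitting a copy list among map tasks can be sketched as below. The greedy size-balancing used here is an assumption for illustration, not a description of DistCp's actual strategy, and the function name, paths, and sizes are hypothetical.

```python
def partition_copy_list(files, num_maps):
    """Spread (path, size) pairs over tasks, giving each file to the least-loaded task."""
    tasks = [{"files": [], "bytes": 0} for _ in range(num_maps)]
    # Place the largest files first so the loads even out.
    for path, size in sorted(files, key=lambda item: item[1], reverse=True):
        lightest = min(tasks, key=lambda task: task["bytes"])
        lightest["files"].append(path)
        lightest["bytes"] += size
    return tasks

files = [("/src/a", 700), ("/src/b", 300), ("/src/c", 200), ("/src/d", 200)]
tasks = partition_copy_list(files, num_maps=2)
print(tasks)
```

Each task ends up with its own subset of the source list, so the copies can proceed in parallel.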

34. Describe the main elements of Hadoop

An open-source framework called Hadoop is designed to store and handle large amounts of data in a distributed fashion.

The Key Elements of Hadoop

HDFS (Hadoop Distributed File System): HDFS is Hadoop's main storage system. The vast amount of data is kept on HDFS. It was primarily designed for storing enormous datasets on inexpensive commodity hardware.

Hadoop MapReduce: Hadoop's MapReduce layer is in charge of data processing. It processes the structured and unstructured data already stored in HDFS. By dividing the data into separate tasks, it handles the parallel processing of a large amount of data. Processing has two phases, Map and Reduce. Put simply, Map is the phase in which data blocks are read and made available for processing, and Reduce is the phase in which the processed results are combined.

YARN: YARN is Hadoop's resource management framework. It manages cluster resources and supports a variety of data processing engines, including real-time streaming, data science, and batch processing.

35. What are the different Output formats in Hadoop?

The different output formats in Hadoop are:

- TextOutputFormat

- MapFileOutputFormat

- DBOutputFormat

- SequenceFileOutputFormat

- SequenceFileAsBinaryOutputFormat
